GlobalChatTranslator

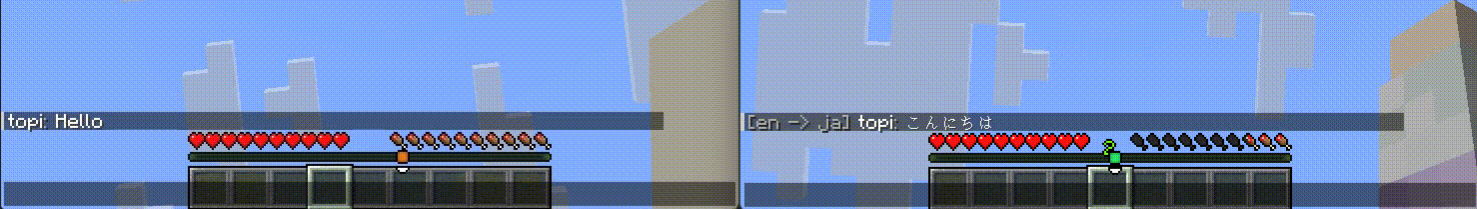

A Minecraft plugin that translates player chat messages in real-time using AI. Players can set their preferred language individually, and messages are automatically translated for each recipient — enabling seamless communication across language barriers.

Requirements

| Requirement | Details |
|---|---|
| Server | Spigot or Paper 1.21+ |
| Java | 21+ |
| LLM provider (pick one) | Google AI Studio API key / OpenAI Platform API key / Self-hosted Ollama instance |

Features

- Real-time translation: Chat messages are automatically translated per recipient based on their language setting, powered by Google Gemini, OpenAI ChatGPT, or a self-hosted Ollama instance.
- Per-player language: Each player sets their preferred language with `/gct lang`. Settings persist across sessions.
- Skip prefix: Prefix a message with `!` (configurable) to send it as-is without translation.
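The skip-prefix behaviour can be sketched as follows. This is a minimal illustration, not the plugin's actual code: the class `SkipPrefix` and the method `bypass` are hypothetical names. It assumes the prefix is stripped before delivery and that an empty prefix disables the feature, as the `skip-prefix` comments in `config.yml` describe.

```java
import java.util.Optional;

/** Hypothetical helper illustrating the skip-prefix rule; not taken from the plugin. */
final class SkipPrefix {
    private SkipPrefix() {}

    /**
     * Returns the message with the prefix stripped when it should bypass
     * translation, or an empty Optional when it should be translated.
     * An empty or null prefix disables the feature (skip-prefix: "").
     */
    static Optional<String> bypass(String message, String prefix) {
        if (prefix == null || prefix.isEmpty() || !message.startsWith(prefix)) {
            return Optional.empty();
        }
        return Optional.of(message.substring(prefix.length()));
    }

    public static void main(String[] args) {
        System.out.println(bypass("!gg wp", "!")); // prefixed: delivered as-is, prefix removed
        System.out.println(bypass("hello", "!"));  // no prefix: goes through translation
    }
}
```

Returning an `Optional` keeps the "translate or not" decision and the stripped text in one value, so the caller cannot forget to remove the prefix.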

Installation

- Download `GlobalChatTranslator-*.jar`.
- Place the jar file inside the `plugins/` directory.
- Start the server once to generate `plugins/GlobalChatTranslator/config.yml`.
- Edit `config.yml` to set your provider and API key (see Configuration).
- Run `/gct reload` or restart the server.

Providers

Google Gemini

Obtain an API key from Google AI Studio.

provider: "gemini"

gemini-api-key: "YOUR_GEMINI_API_KEY"

gemini-model: "gemini-2.5-flash-lite"

Recommended models: gemini-2.5-flash-lite, gemini-2.5-flash, gemini-2.5-pro

OpenAI ChatGPT

Obtain an API key from the OpenAI Platform.

provider: "openai"

openai-api-key: "YOUR_OPENAI_API_KEY"

openai-model: "gpt-4o-mini"

Recommended models: gpt-4o-mini, gpt-4o, gpt-4.1

Ollama (self-hosted)

Install Ollama and pull a model locally. No API key is required. For real-time chat translation, smaller and faster models (2B to 4B parameters) are strongly recommended, because response latency matters more than output quality.

provider: "ollama"

ollama-url: "http://localhost:11434"

ollama-model: "gemma4:e2b"

Recommended models: gemma4:e2b, gemma3:2b, qwen2.5:3b
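To check that an Ollama instance is usable before pointing the plugin at it, a request like the one below can help. It is only a sketch under stated assumptions: it targets Ollama's `POST /api/generate` endpoint with `"stream": false`, while the plugin itself may use a different endpoint or prompt. The class name `OllamaRequestSketch`, the prompt wording, and the hand-rolled JSON escaping are all illustrative.

```java
import java.net.URI;
import java.net.http.HttpRequest;
import java.time.Duration;

/** Hypothetical sketch of a translation request to Ollama; not the plugin's code. */
final class OllamaRequestSketch {
    private OllamaRequestSketch() {}

    /** Minimal JSON escaping: covers quotes, backslashes and common whitespace only. */
    static String jsonEscape(String s) {
        StringBuilder out = new StringBuilder();
        for (char c : s.toCharArray()) {
            if (c == '"') out.append("\\\"");
            else if (c == '\\') out.append("\\\\");
            else if (c == '\n') out.append("\\n");
            else if (c == '\r') out.append("\\r");
            else if (c == '\t') out.append("\\t");
            else out.append(c);
        }
        return out.toString();
    }

    /** Builds the JSON body. The prompt wording is an assumption made for this sketch. */
    static String body(String model, String targetLang, String message) {
        String prompt = "Translate the following chat message into language code '"
                + targetLang + "'. Reply with the translation only.\n\n" + message;
        return "{\"model\":\"" + jsonEscape(model) + "\","
                + "\"prompt\":\"" + jsonEscape(prompt) + "\","
                + "\"stream\":false}";
    }

    /** Builds (but does not send) the HTTP request, with a per-request timeout. */
    static HttpRequest build(String baseUrl, String model, String targetLang,
                             String message, int timeoutSeconds) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/api/generate"))
                .timeout(Duration.ofSeconds(timeoutSeconds))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(body(model, targetLang, message)))
                .build();
    }

    public static void main(String[] args) {
        HttpRequest request = build("http://localhost:11434", "gemma4:e2b", "ja", "hello", 10);
        System.out.println(request.method() + " " + request.uri());
    }
}
```

Sending the request with `java.net.http.HttpClient` and timing the response gives a rough idea of whether a given model is fast enough for live chat.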

Commands

| Command | Permission | Description |
|---|---|---|
| `/gct lang <code>` | `gct.use` | Set your preferred language (e.g. en, ja, es) |
| `/gct lang` | `gct.use` | Show your current language |
| `/gct reload` | `gct.admin` | Reload config.yml and apply changes |
| `/gct help` | None | Show help |

Language codes

ISO 639-1 codes are supported. Examples: ar cs da de en es fa fi fr hu id it ja ko ms nb nl pl pt ro ru sv th tr uk vi zh
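A two-letter code can be checked against the JDK's own list before it is passed to `/gct lang`. The sketch below assumes nothing about how the plugin validates input: the class `LanguageCodes` is a hypothetical name, and it relies only on `Locale.getISOLanguages()`, which returns the two-letter ISO 639 codes known to the running JDK.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Locale;
import java.util.Set;

/** Hypothetical validator for two-letter language codes; not the plugin's code. */
final class LanguageCodes {
    // Two-letter ISO 639 codes known to the running JDK.
    private static final Set<String> ISO_CODES =
            new HashSet<>(Arrays.asList(Locale.getISOLanguages()));

    private LanguageCodes() {}

    /** True if the input, trimmed and lower-cased, is a known two-letter code. */
    static boolean isValid(String code) {
        return code != null
                && ISO_CODES.contains(code.trim().toLowerCase(Locale.ROOT));
    }

    public static void main(String[] args) {
        for (String candidate : new String[] {"en", "JA", "english"}) {
            System.out.println(candidate + " valid: " + isValid(candidate));
        }
    }
}
```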

Permissions

| Permission | Default | Description |
|---|---|---|
| `gct.use` | true | Allows players to set their language |
| `gct.admin` | op | Allows reloading the config |

Configuration (plugins/GlobalChatTranslator/config.yml)

# =============================================

# GlobalChatTranslator - Configuration

# =============================================

# Translation provider to use.

# Supported values: gemini, openai, ollama

# After changing this value, run /gct reload to apply.

provider: "gemini"

# =============================================

# Gemini Settings (provider: gemini)

# =============================================

# API key obtained from Google AI Studio (https://aistudio.google.com/)

gemini-api-key: "YOUR_GEMINI_API_KEY"

# Gemini model to use for translation.

# Recommended: gemini-2.5-flash-lite (fastest, lowest cost)

# Other options: gemini-2.5-flash, gemini-2.5-pro, gemini-2.0-flash, gemini-1.5-flash

gemini-model: "gemini-2.5-flash-lite"

# =============================================

# Ollama Settings (provider: ollama)

# =============================================

# Base URL of your Ollama server.

ollama-url: "http://localhost:11434"

# Ollama model to use for translation.

# The model must be pulled in advance (e.g. ollama pull gemma4:e2b).

# For real-time chat translation, smaller and faster models (2B~4B parameters) are

# strongly recommended over large models. Response latency matters more than accuracy.

# Recommended: gemma4:e2b, gemma3:2b, qwen2.5:3b

ollama-model: "gemma4:e2b"

# =============================================

# OpenAI Settings (provider: openai)

# =============================================

# API key obtained from OpenAI Platform (https://platform.openai.com/)

openai-api-key: "YOUR_OPENAI_API_KEY"

# OpenAI model to use for translation.

# Recommended: gpt-4o-mini (low cost, sufficient quality)

# Other options: gpt-4o, gpt-4.1

openai-model: "gpt-4o-mini"

# =============================================

# General Settings

# =============================================

# Default language assigned to players who have not set a preference.

# Uses ISO 639-1 codes (e.g. en, ja, zh, es, fr).

default-language: "en"

# Format for messages that were translated.

# Placeholders: %source_lang%, %target_lang%, %player%, %message%

# Use & followed by a color code for formatting (e.g. &7 for gray, &f for white).

message-format: "&7[%source_lang% -> %target_lang%] &f%player%&7: &f%message%"

# Format for messages shown to recipients who share the same language as the sender

# (translation is skipped). Same placeholders are available.

same-language-message-format: "&f%player%&7: &f%message%"

# Show a "Translating..." action bar message to the sender while the API is processing.

# Disable this if you prefer a quieter experience or notice display conflicts.

translating-action-bar: true

# Notify players of their current language setting when they join the server.

notify-language-on-join: true

# Messages that begin with this prefix are sent as-is without translation.

# The prefix character is stripped before the message is delivered.

# Set to "" to disable.

skip-prefix: "!"

# Minimum interval between translated messages per player, in milliseconds.

# Players who send messages faster than this rate will have their message blocked.

# Set to 0 to disable.

cooldown: 1000

# Maximum time to wait for an API response, in seconds.

# If the provider does not respond within this time, the original message is

# delivered untranslated rather than dropped.

api-timeout: 10

# Log raw API request and response bodies to the console.

# Useful for diagnosing translation failures or unexpected output.

# Disable in production as API keys may appear in the output.

debug: false
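How the `message-format` placeholders and `&` colour codes might expand is sketched below. This is an illustration only, not the plugin's implementation: the class `MessageFormatSketch` and the method `render` are hypothetical, and the substitution order is a choice made here. Colour codes are converted in the template before the chat text is inserted, so `&` sequences typed by players are left untouched.

```java
/** Hypothetical renderer for message-format templates; not the plugin's code. */
final class MessageFormatSketch {
    private MessageFormatSketch() {}

    /**
     * Expands %source_lang%, %target_lang%, %player% and %message% in the template
     * and turns "&x" colour codes into section-sign codes.
     */
    static String render(String template, String sourceLang, String targetLang,
                         String player, String message) {
        // Convert colour codes in the template only, before any player text is inserted.
        String coloured = template.replaceAll("&([0-9a-fk-orA-FK-OR])", "\u00A7$1");
        return coloured
                .replace("%source_lang%", sourceLang)
                .replace("%target_lang%", targetLang)
                .replace("%player%", player)
                // Substituted last so placeholders inside the chat text are not re-expanded.
                .replace("%message%", message);
    }

    public static void main(String[] args) {
        String template = "&7[%source_lang% -> %target_lang%] &f%player%&7: &f%message%";
        System.out.println(render(template, "ja", "en", "Steve", "hello"));
    }
}
```

On a Spigot or Paper server, the colour step is usually done with `ChatColor.translateAlternateColorCodes('&', text)`; the regular expression above stands in for it so the sketch runs without the server API.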